- The Regulation

- Risk-Based Architecture

- What December 2027 Requires

- Penalties

- Cross-Sector Implications

- From Gap to Governance

- Frequently Asked Questions

The enterprise compliance timeline for the EU AI Act has shifted. Following the EU's May 7, 2026 Omnibus Agreement, the previous August 2, 2026 deadline has been extended. The extension does not remove the pressure on businesses to audit their systems and clear their governance debt now, before it has to be fixed retroactively.

The Regulation That Rewrites the Rules

The EU AI Act is not GDPR for AI. It is fundamentally different in structure, scope, and mechanism. GDPR governs data. The AI Act governs systems — the decisions those systems make, the contexts in which they operate, and the organizational accountability structures that surround them.

The regulation applies extraterritorially, exactly like GDPR. Any organization whose AI systems operate in the EU or affect EU residents is in scope, regardless of where the company is headquartered. A healthcare company in the Gulf, a financial services firm in the US, an educational technology provider in Southeast Asia: all are in scope if their systems process EU-resident data or affect EU-resident outcomes.

Three roles define regulatory responsibility:

- Providers: Organizations that develop AI systems and place them on the EU market. Highest compliance burden: documentation, conformity assessment, and registration obligations apply.

- Deployers: Organizations that use AI systems in professional contexts affecting EU residents. Significant obligations including fundamental rights impact assessments, human oversight, and incident reporting.

- Importers and distributors: Entities in the AI supply chain with defined obligations around market placement and compliance verification of upstream providers.

The Risk-Based Architecture

Understanding where your AI systems sit in the Act's risk taxonomy is the prerequisite for every compliance decision that follows.

Prohibited Systems — Effective February 2025

Eight categories have been banned outright since February 2025, and the May 2026 Omnibus Agreement adds a ninth (the last item below). Organizations operating any of these face mandatory suspension. This is a prohibition, not a compliance timeline:

- Social scoring systems by public authorities

- Real-time remote biometric surveillance in public spaces (narrow law enforcement exceptions apply)

- AI exploiting vulnerabilities of individuals based on age, disability, or social situation

- Subliminal manipulation bypassing conscious awareness

- Emotion recognition in workplace and educational environments

- Biometric categorization inferring sensitive attributes (race, political views, religious beliefs)

- Predictive policing based on profiling

- Untargeted scraping of facial images from the internet or CCTV

- AI "nudifier" apps, non-consensual explicit deepfakes, and CSAM generation (Strict new prohibitions added via the May 2026 Omnibus Agreement, carrying maximum penalties of up to €35M or 7% of global annual turnover, taking full effect on December 2, 2026)

High-risk AI systems — Updated Compliance Timelines

Following the EU's May 7, 2026 Omnibus Agreement, compliance timelines have been extended to give enterprises a clear window to remediate governance debt. Standalone high-risk AI (Annex III) obligations now apply starting December 2, 2027, while embedded AI systems (Annex I) have shifted to August 2, 2028. This category remains where the primary compliance burden sits for most enterprises. High-risk AI systems include AI used in:

- Critical Infrastructure: Energy, water, transport, financial systems

- Education: Systems determining access to education or evaluating students

- Employment: CV screening, candidate ranking, performance monitoring, promotion decisions

- Essential Services: Credit scoring, insurance risk assessment, emergency services dispatch

- Law Enforcement: Risk assessment, polygraph-adjacent systems, evidence evaluation

- Migration and Border Control: Document verification, risk profiling

- Administration of Justice: Legal dispute assistance, case outcome prediction

- Democratic Processes: Influencing elections, voter targeting
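The applicability dates quoted in this article can be kept as data so that planning tools count down to them consistently. The following is a minimal sketch, not an official calendar: the dictionary keys and the `days_remaining` helper are hypothetical names, and the dates are only those stated in this article.

```python
from datetime import date

# Dates as stated in this article; the key names are illustrative assumptions.
APPLICABILITY_DATES = {
    "new_prohibitions_omnibus": date(2026, 12, 2),
    "annex_iii_standalone_high_risk": date(2027, 12, 2),
    "annex_i_embedded_high_risk": date(2028, 8, 2),
}


def days_remaining(obligation: str, today: date) -> int:
    """Days from `today` until the obligation applies (negative once it has passed)."""
    return (APPLICABILITY_DATES[obligation] - today).days
```

Passing `today` explicitly, rather than calling `date.today()` inside the function, keeps the calculation reproducible in reports and tests.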

Research from the EU AI Act compliance checker found that 33% of EU AI startups believed their systems would be classified as high-risk, compared to the 5–15% estimated by the European Commission. Whichever figure proves closer to the truth, a gap that wide indicates that organizations are systematically misclassifying their own systems.

The Article 6(3) Profiling Hard Stop: This pattern of misclassification is facing immediate regulatory correction. Under the 147-page draft guidance published by the EU on May 19, 2026, the Article 6(3) "low-risk filter" is completely void if an AI system performs individual profiling. If your tool automatically tracks, scores, or evaluates human behavior, work performance, or health, there is no self-assessment loophole: your system is categorically High-Risk, and full infrastructure compliance is mandatory regardless of the timeline extensions.
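The tier logic described in this section, including the profiling override, can be sketched as a small decision function. This is a simplified illustration under stated assumptions, not legal advice or an official classification tool: the `AISystem` fields and the `classify` function are hypothetical, and a real assessment depends on the full text of the Act and the guidance.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    TRANSPARENCY = "transparency"
    MINIMAL = "no mandatory obligations"


@dataclass(frozen=True)
class AISystem:
    # All fields are illustrative assumptions, answered by the assessor.
    name: str
    prohibited_practice: bool = False     # matches a banned category
    annex_iii_area: bool = False          # used in a listed high-risk area
    claims_low_risk_filter: bool = False  # self-assessed Article 6(3) exemption
    profiles_individuals: bool = False    # tracks, scores, or evaluates people
    interacts_with_users: bool = False    # chatbot, synthetic media, and similar


def classify(system: AISystem) -> RiskTier:
    if system.prohibited_practice:
        return RiskTier.PROHIBITED
    if system.annex_iii_area:
        # Profiling voids the low-risk filter, so the exemption claim is
        # only honoured when the system does not profile individuals.
        if system.profiles_individuals or not system.claims_low_risk_filter:
            return RiskTier.HIGH
    if system.interacts_with_users:
        return RiskTier.TRANSPARENCY
    return RiskTier.MINIMAL
```

For example, a CV-ranking tool that claims the filter but scores candidates would still come out as `RiskTier.HIGH`, because the profiling check is evaluated before the exemption claim is considered.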

Transparency Requirements

Chatbots, deepfakes, AI-generated content — these systems require disclosure to users that they are interacting with AI. The obligation is behavioral transparency, not technical documentation.

No Mandatory Obligations

Spam filters, recommendation engines, most AI tools used internally — no mandatory EU AI Act obligations, though voluntary codes of conduct are encouraged.

What the December 2, 2027 Deadline Requires

The compliance obligations for high-risk AI systems are specific, documented, and verifiable. Organizations must demonstrate, not assert, compliance.

Risk Management System

A continuous risk management process — not a point-in-time assessment. Includes identification of reasonably foreseeable risks, residual risk evaluation, post-deployment monitoring, and documented iteration as the system evolves.

Data Governance

Training, validation, and testing data must meet requirements for relevance, representativeness, freedom from errors, and completeness. The 34% of low-maturity organizations Gartner identifies as facing data availability and quality challenges are not yet ready for this requirement.

Technical Documentation

Comprehensive technical documentation created before market placement, covering system purpose, design choices, validation methodology, performance metrics, and limitations. Documentation assembled during an inspection is itself a sign of non-compliance, and regulators have learned to recognize it.

Transparency & Human Oversight

High-risk systems must be designed with sufficient transparency for deployers to interpret outputs correctly. Human oversight is not a UI checkbox — it requires organizational structures, training, and documented accountability for who carries oversight authority.

Accuracy, Robustness & Cybersecurity

Appropriate accuracy metrics, resilience against adversarial inputs, and cybersecurity protections. These require ongoing monitoring, not one-time testing.

Conformity Assessment

Certain high-risk systems require third-party conformity assessment by designated Notified Bodies. Notified body capacity is already constrained — organizations that have not secured assessment slots are now facing scheduling backlogs that compress their compliance runway.

The Penalties Organizations Are Now Facing

The EU AI Act's penalty structure exceeds GDPR's maximum fines and is structured across three tiers. For large enterprises, these are not theoretical numbers — they represent enterprise-level exposure that makes compliance infrastructure economically rational.

Beyond fines, the Act creates exposure through mandatory recalls, market access suspension, and civil claims from affected individuals for fundamental rights violations. National laws in several EU member states are extending AI Act non-compliance into potential criminal liability.
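To make the exposure concrete, the top-tier ceiling mentioned earlier (up to €35M or 7% of global annual turnover) can be worked through as arithmetic. The sketch below assumes the "whichever is higher" rule that Article 99 applies to undertakings; the function name is hypothetical, and the defaults cover only the top tier quoted in this article, so the other tiers would need their own values.

```python
def fine_ceiling_eur(
    turnover_eur: int,
    fixed_cap_eur: int = 35_000_000,  # top-tier fixed amount quoted in this article
    turnover_pct: int = 7,            # top-tier share of global annual turnover
) -> int:
    """Upper bound of a fine, assuming the higher of the two limits applies."""
    # Integer arithmetic avoids floating-point rounding on large euro amounts.
    return max(fixed_cap_eur, turnover_eur * turnover_pct // 100)
```

Under these assumptions the fixed amount dominates until global turnover reaches €500M, after which the percentage sets the ceiling: a company with €2B in turnover faces a ceiling of €140M rather than €35M.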

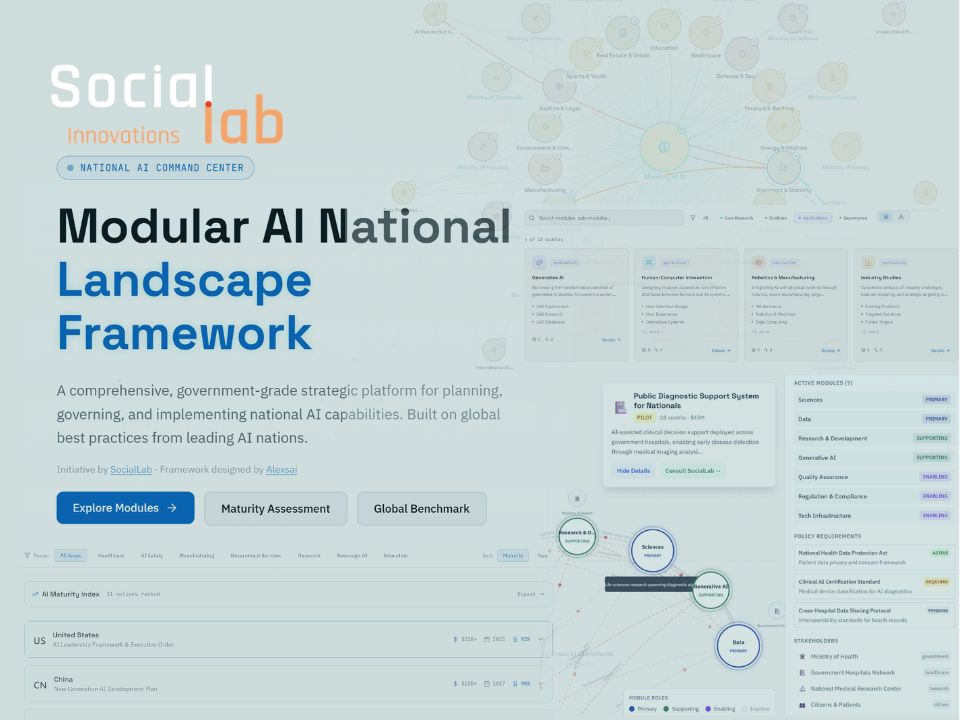

Cross-Sector Implications

The SYNLAB Precedent

SocialLab's work building the Intelligent Diagnostics platform with SYNLAB — an AI-powered diagnostic support system across EU countries — offers a direct precedent for what EU AI Act compliance requires in medical contexts. EU medical certification demanded governance infrastructure from the outset: complete audit trails for every AI-assisted decision, regulatory compliance frameworks before deployment, clear physician decision authority with AI in a support role, and continuous validation feedback mechanisms. Healthcare organizations that built governance infrastructure alongside AI capability avoided costly retrofitting.

Layered Compliance Complexity

Credit scoring, insurance underwriting, fraud detection — these are high-risk AI systems under the Act. Financial organizations face layered compliance: EU AI Act obligations intersecting with existing regulation (MiFID II, Basel III, DORA). The data governance requirements of Article 10 map to existing model risk management frameworks, but the human oversight requirements of Article 14 create new organizational accountability demands that most financial compliance functions haven't yet addressed.

Emotion Recognition Prohibition

Recruitment tools, CV screening platforms, student assessment systems — organizations using these tools as deployers carry fundamental rights impact assessment obligations. The EU AI Act's prohibition on emotion recognition in educational environments is particularly significant for edtech providers. As explored in AI in Education 2026: Closing the Governance Gap, educational institutions that built governance infrastructure proactively are positioned far better than those now facing mandatory retrofitting.

Synthetic Media & Disclosure

Generative AI systems creating synthetic media now carry mandatory disclosure obligations. Organizations using AI for content personalization, recommendation systems, or synthetic media generation face transparency requirements and, where these systems affect democratic processes, potential high-risk classification. SocialLab's work on disinformation detection confirms: the intersection of AI and information integrity is one of the Act's most consequential application domains.

From Gap to Governance: The Infrastructure Approach

The EU AI Act's compliance requirement is not a documentation exercise. It is a governance infrastructure question. The consistent finding across every sector where AI regulation has arrived: organizations that built governance infrastructure alongside AI capability achieved sustainable compliance. Those that prioritized tools over governance now face expensive, time-compressed remediation.

The 2026 AI maturity research we've covered previously is directly relevant here. Organizations overestimating their AI maturity tend to overestimate their compliance readiness for the same reason: they conflate technical deployment with organizational capability. An AI system running in production is not evidence of governance infrastructure. It may be evidence of governance debt.

Conduct an Honest AI Inventory

Most organizations do not have a complete picture of their AI deployments. The first compliance requirement is visibility — knowing which systems exist, who operates them, what decisions they influence, and which risk tier they occupy. This requires forensic analysis across the technology stack, not a survey of known systems.
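The four visibility questions above (which systems exist, who operates them, what decisions they influence, which tier they occupy) map naturally onto an inventory record. The following is a minimal sketch with hypothetical field and function names; it shows one way to surface unclassified and unowned systems, not a prescribed register format.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class InventoryEntry:
    # Field names are illustrative assumptions, one per visibility question.
    system: str
    operator: Optional[str] = None
    decisions_influenced: list = field(default_factory=list)
    risk_tier: Optional[str] = None


def tier_summary(entries) -> Counter:
    """Count systems per risk tier, grouping unassessed ones as 'unclassified'."""
    return Counter(e.risk_tier or "unclassified" for e in entries)


def without_operator(entries) -> list:
    """Names of systems that nobody is recorded as operating."""
    return [e.system for e in entries if not e.operator]
```

A large "unclassified" count or a long `without_operator` list is a direct measure of how far the inventory is from complete.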

Classify Accurately — and Conservatively

The natural organizational tendency is to classify AI systems at the lowest risk tier that can be justified. This is exactly backwards. The compliance and legal exposure of misclassifying a high-risk system as limited-risk dwarfs the operational burden of treating a limited-risk system with high-risk protocols. When classification is ambiguous, conservative treatment is the defensible position.

Build the Six Pillars of High-Risk Compliance

Organizations with high-risk systems must establish: a continuous risk management process, documented data governance practices, comprehensive technical documentation, human oversight structures with clear accountability, ongoing performance monitoring, and conformity assessment pathways where required.
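The six pillars listed above lend themselves to a simple gap check per high-risk system. This is a sketch under assumptions: the pillar identifiers, the `evidence` mapping (pillar name to a reference for the supporting artefact), and the `missing_pillars` function are all hypothetical names introduced for illustration.

```python
SIX_PILLARS = (
    "risk_management",
    "data_governance",
    "technical_documentation",
    "human_oversight",
    "performance_monitoring",
    "conformity_assessment",
)


def missing_pillars(evidence: dict, needs_conformity_assessment: bool = True) -> list:
    """Return the pillars for which no evidence reference has been recorded."""
    required = [
        p for p in SIX_PILLARS
        if p != "conformity_assessment" or needs_conformity_assessment
    ]
    # A blank or whitespace-only reference counts as missing evidence.
    return [p for p in required if not str(evidence.get(p, "")).strip()]
```

The `needs_conformity_assessment` flag reflects that conformity assessment pathways apply only where required, while the other five pillars are checked for every high-risk system.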

Integrate Governance into the Development Lifecycle

The most expensive compliance failures occur when governance is added to systems already in production. The EU AI Act requires that documentation, risk management, and oversight mechanisms exist before deployment — not as retrospective additions. Organizations with AI systems in development have the advantage of building compliance infrastructure concurrent with capability. Those with legacy systems face the harder path.

The competitive reality is this: organizations that treat EU AI Act compliance as a governance infrastructure project — building systematic capability, assessing honestly, and progressing methodically — will achieve sustainable compliance and position themselves as trusted operators in a regulated AI market.

Organizations that treat it as a documentation exercise will face enforcement.

Governance built concurrent with deployment is sustainable. Governance retrofitted after deployment is expensive and often incomplete.

Frequently Asked Questions

Common questions about EU AI Act compliance, risk classification, and governance obligations in 2026.